tangent

Back in the 50s, computers were room-sized machines, essentially massive calculators. The first I/O interface was the control panel, with programs entered manually via switches; next came batch processing and punch cards. Everything changed with CTSS, the Compatible Time-Sharing System, developed at MIT in the early 60s. For the first time, multiple terminals could share a single computer, and users could have an interactive programming experience.

Louis Pouzin, a visiting French scientist, had been accumulating programs and routines for CTSS and realized he should be able to combine and reuse them — use them as building blocks for larger commands. He wrote an early prototype called RUNCOM, which supported argument substitution and basic scripting.

Around 1964, the Multics project got started — an ambitious collaboration between MIT, General Electric, and Bell Labs. Pouzin didn't stay on, but contributed a paper coining the term shell: 'The SHELL: A Global Tool for Calling and Chaining Procedures in the System.' Glenda Schroeder picked it up and implemented it as the Multics command language.

Multics envisioned computing as a utility - like water or electricity, a central resource users would subscribe to. It was a bold vision, but eventually imploded under its own weight: over-engineered, slow, arriving just as the industry was shifting to smaller minicomputers. Bell Labs pulled out first. Despite being a commercial failure, it spawned many features still around today.

While working on Multics, Bell Labs' Ken Thompson had written a video game called Space Travel. Off the project now, he ported it to a DEC PDP-7 - ignored the existing OS entirely, wrote his own routines from scratch, and eventually found himself building a minimal operating system from the best ideas Multics had generated: hierarchical filesystem, simple process model, command line shell. Punning on the name, he called it Unix. Its DNA lives on today in Linux, Android, and macOS.

main thread

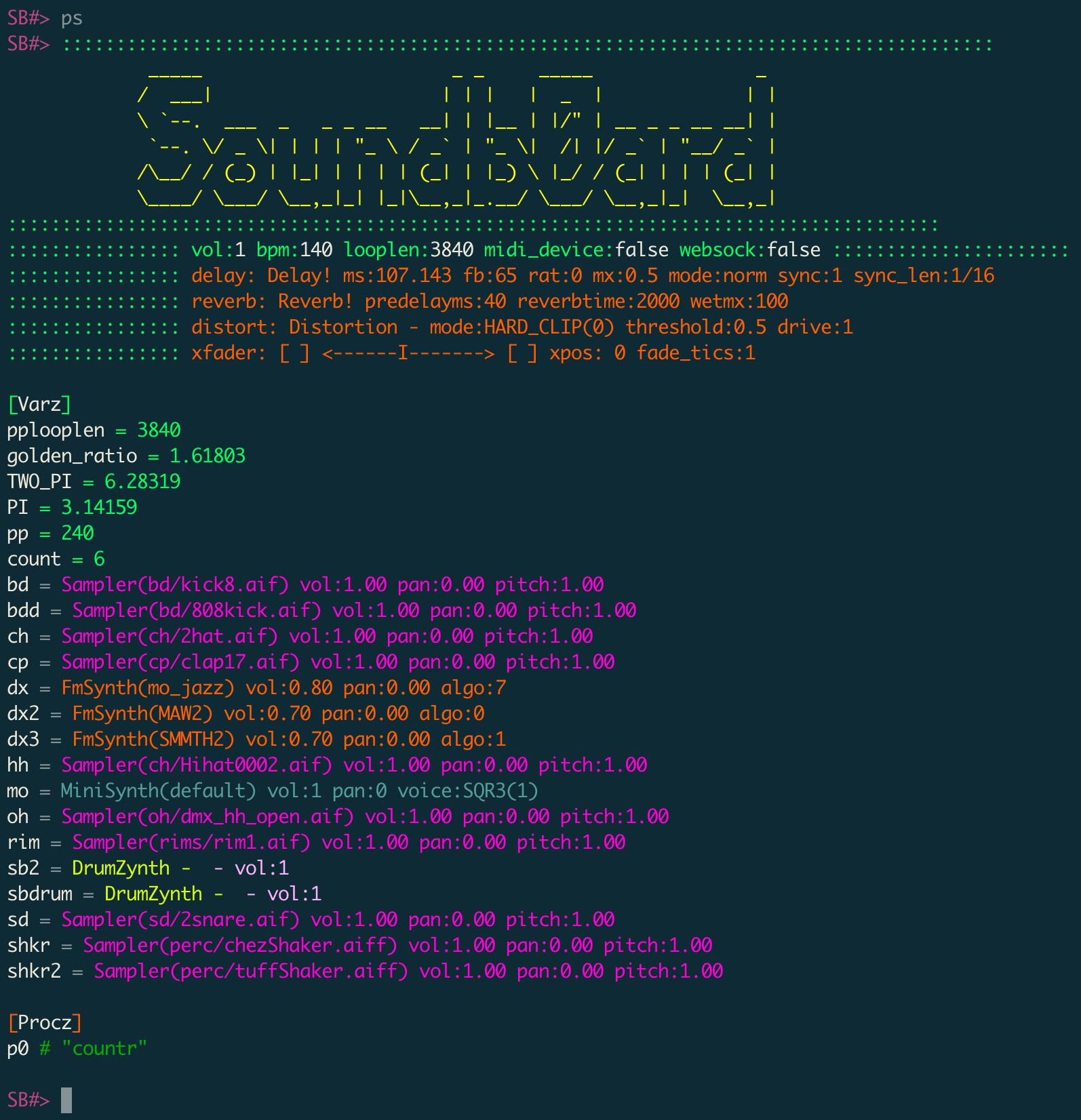

Starting out as a Linux sysadmin in the 90s, I've lived my work life in a terminal shell — it's how you manage servers, log into remote machines, troubleshoot the slow website. How you get shit done. The alternative is the point-and-click interfaces of Windows and Mac, which are convenient until you need to do something fifty times, or to reproduce it exactly. With a shell, you save your commands in a script, and it's consistent, repeatable, editable, shareable.

Sysadmins write a lot of scripts — automating password changes across machine and network fleets, archiving logs, monitoring bandwidth. But a script isn't a program. A web browser, a music player, a video editor — that's a different order of complexity entirely. After years working with operating systems, I wanted to learn the language Linux was written in: C.

C goes right back to Unix at Bell Labs. The first iterations were written in assembly — barely a step above machine language. Thompson needed something higher, and adapted Martin Richards' BCPL into his own stripped-down language: B. His colleague Dennis Ritchie improved on it, incrementing the name to C. The full manual — the classic K&R The C Programming Language — is a modest book you can read in a weekend.

I always think of C as the original hacker language. There is a fairly recent O’Reilly book on C which describes it as punk rock - comparing its brevity to the classic “Here’s 3 chords…now form a band”.

One of my very first C exercises was in how to write a simple command line shell. The purpose of the tutorial was to demonstrate how to read text input from the terminal, compare the text to a list of commands and then execute the command. My simple shell only had one command to start with - list the files in your current directory.

My desire to learn C wasn't coming from sysadmin work. For a few years I'd been doing creative coding on the side - node audio scripts over RabbitMQ, sensor-driven Arduino cars. I'd been making music in Ableton for years but was never satisfied with using samples and loops. Inspired by friends and housemates who built their own systems with Max/MSP and custom languages, I decided I wanted to learn how to program audio and create my own sounds.

A few weeks later I was learning to synthesize sine waves. Unlike GUI music programs where you can turn dials and sliders, I was writing and launching command line utilities. I could write code to create a frequency playing at 440Hz, compile, run it, and hear the audio—but I had no way to control it once started. How could I actually interact with the audio process after it had started?
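As a rough illustration of that first step (not the actual code from those exercises), here is a minimal C++ sketch that fills a buffer with a 440Hz sine wave. The function name `MakeSine` and its parameters are made up for this example, and writing the buffer to a sound card or a WAV file is left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Fill a buffer with a mono sine wave.
// MakeSine and its parameter names are illustrative, not from Soundb0ard.
std::vector<float> MakeSine(double frequency_hz, double sample_rate,
                            std::size_t num_samples, double amplitude = 0.5) {
  assert(sample_rate > 0.0);
  const double two_pi = 2.0 * std::acos(-1.0);
  // How far the phase advances per output sample, in radians.
  const double phase_inc = two_pi * frequency_hz / sample_rate;
  std::vector<float> out(num_samples);
  double phase = 0.0;
  for (std::size_t n = 0; n < num_samples; ++n) {
    out[n] = static_cast<float>(amplitude * std::sin(phase));
    phase += phase_inc;
    if (phase >= two_pi) phase -= two_pi;  // keep the phase bounded
  }
  return out;
}
```

Calling `MakeSine(440.0, 44100.0, 44100)` gives one second of audio at a 44.1kHz sample rate. Once the buffer is handed to the audio device, there is nothing left to adjust, which is exactly the limitation described above.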

That's when I realized I could combine my audio code with a shell. Rather than launching individual processes, I could add commands to my shell to create a sine wave, and then other commands to view its status, change its frequency. Suddenly, rather than having individual tools, I had a platform, an environment to grow and expand.

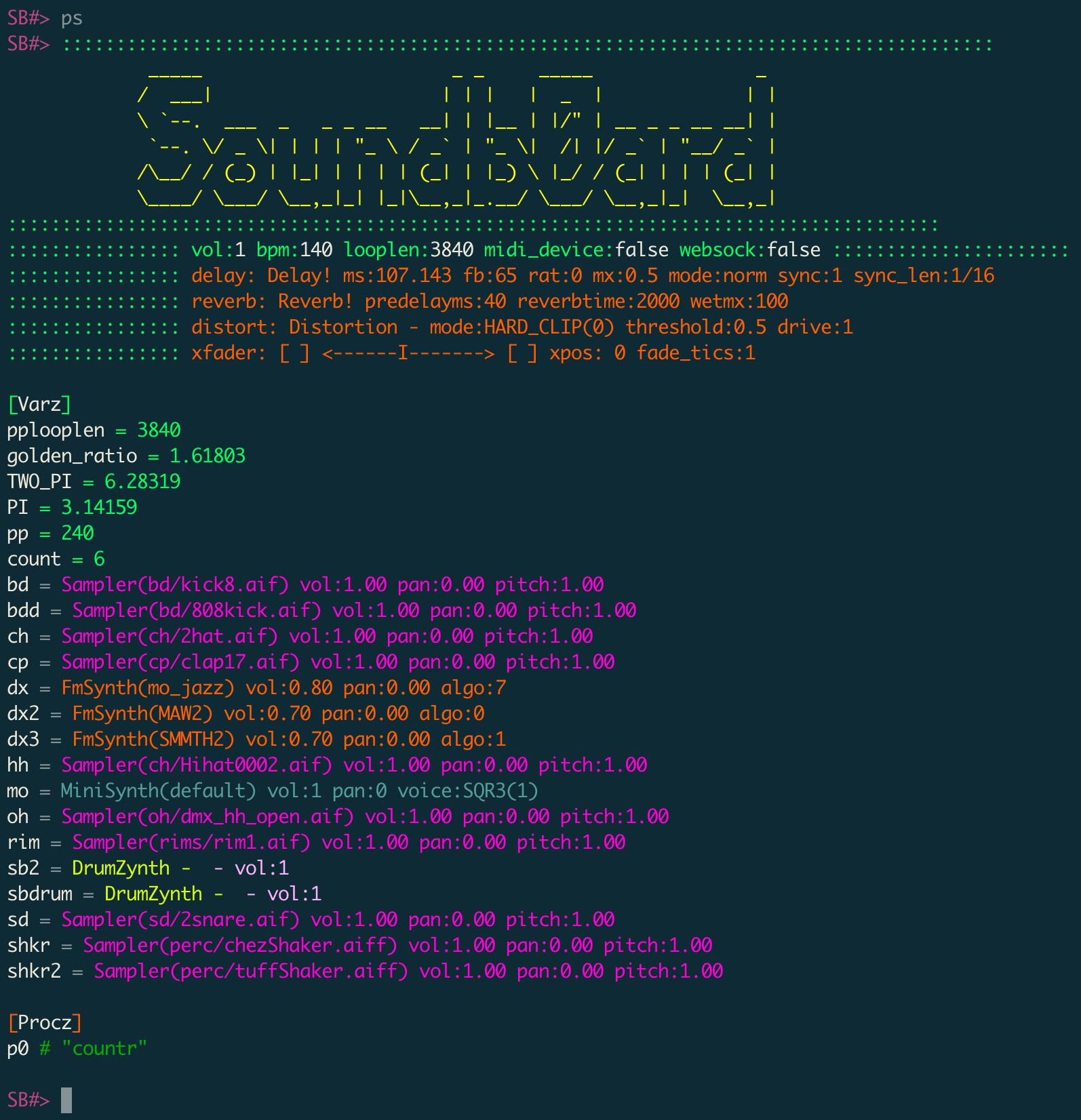

SBShell began to take shape. I had a metaphor to build on: rather than a shell around the operating system, SBShell was a shell around a sound engine, a way to perform live, creating and manipulating audio computations through a command line interface.

I found a book, perfect for my use case, which both taught C and audio programming - "The Audio Programming Book" by Richard Boulanger and Victor Lazzarini. It laid a great foundation of practical C knowledge. The next big influence was “BasicSynth: Creating a Music Synthesizer in Software” by Daniel Mitchell. This one taught me how to organize my instruments, arming me with the concept of a central Mixer which owns all the Sound Generators, and which is responsible for the Audio Callback. I had a simple FM synth, basic time counter and step sequencer. I added sample support for looping and one shot playback.
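The Mixer idea can be sketched in a few lines of C++. This is a simplified guess at the shape of that design, not code from BasicSynth or Soundb0ard: the class names `SoundGenerator`, `ConstantGenerator` and `Mixer`, and the mono `AudioCallback` signature, are all assumptions made for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical interface: anything that can produce one sample at a time.
class SoundGenerator {
 public:
  virtual ~SoundGenerator() = default;
  virtual float NextSample() = 0;
};

// Trivial generator, used here only to exercise the mixer.
class ConstantGenerator : public SoundGenerator {
 public:
  explicit ConstantGenerator(float value) : value_(value) {}
  float NextSample() override { return value_; }

 private:
  float value_;
};

// The mixer owns every generator and fills the output buffer on request.
class Mixer {
 public:
  void Add(std::unique_ptr<SoundGenerator> gen) {
    assert(gen != nullptr);
    generators_.push_back(std::move(gen));
  }

  // Stand-in for the audio callback: an audio API would call something
  // like this whenever it needs num_frames more mono samples.
  void AudioCallback(float* out, std::size_t num_frames) {
    for (std::size_t i = 0; i < num_frames; ++i) {
      float sum = 0.0f;
      for (auto& gen : generators_) sum += gen->NextSample();
      out[i] = sum * master_volume_;
    }
  }

  void set_master_volume(float v) { master_volume_ = v; }

 private:
  std::vector<std::unique_ptr<SoundGenerator>> generators_;
  float master_volume_ = 1.0f;
};
```

Keeping ownership in one place means the callback has a single list to walk, and adding a new instrument is just another `Add` call.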

I spent a year working my way through Will Pirkle’s insanely great “Designing Software Synthesizer Plug-Ins in C++”. It starts you off with simple synth components (oscillators, then envelope generators, a DCA, then filters) and gradually adds features throughout the book, until you’ve built a series of increasingly complex implementations. That is where both my FM and subtractive synths come from. The FM synth is a 4 operator synth based on the DX100 architecture. The subtractive synth is based on a Minimoog.

I worked my way through Curtis Roads’s “Microsound”, with a detour through the writings of Ross Bencina and Robert Henke, before creating my granular synth implementation. Granular synthesis was the perfect way to upgrade my sample looper, which previously had been rigidly incrementing through the sample array, strictly following sample time. It turns out sample time is not the same as wall time, and you have to account for drift. Around the same time I replaced my own hand-rolled time-keeping with Ableton Link, using it as the source of timing truth for my mixer. That beautifully solves the wall clock / sample time issue and allows Soundb0ard to automatically sync with any other Link-enabled device, and, combined with a granular system, my loops flowed smoothly.

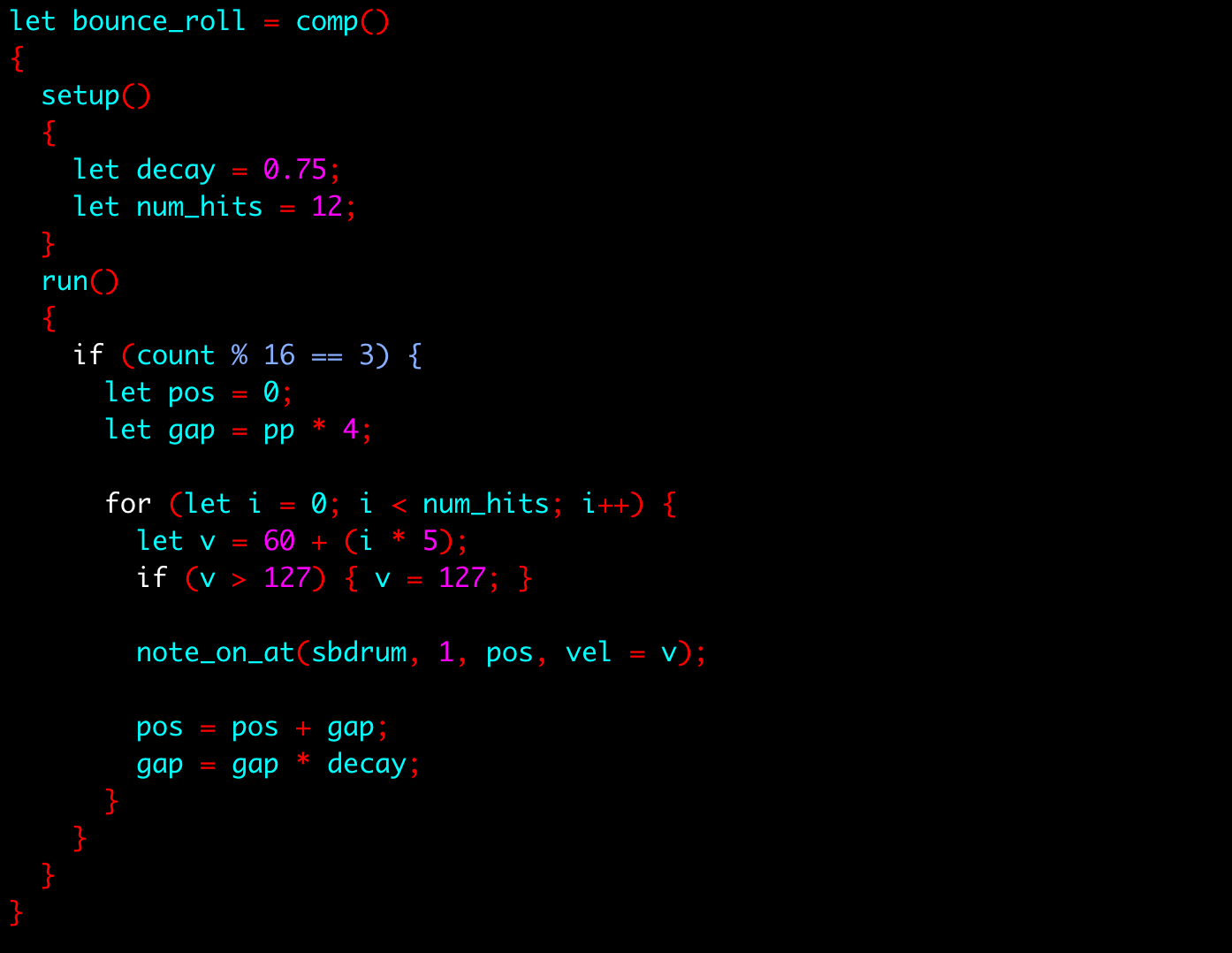

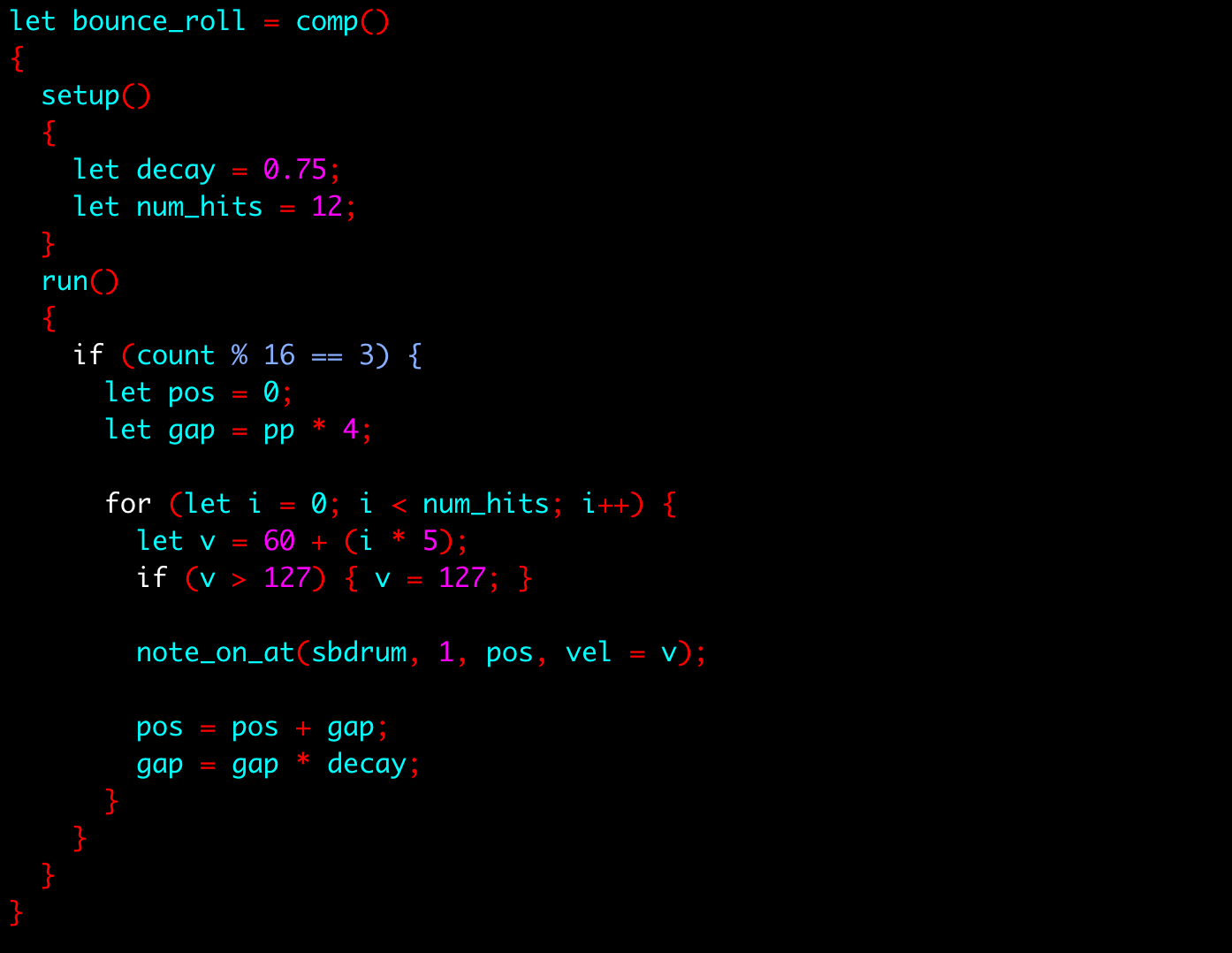

After a few years working in C I felt I had earned an upgrade, and updated the code to use the C++20 standard, followed by spending another year replacing my massive regex text matching interpreter loop with a real programming language, based on Monkey from the amazing “Writing An Interpreter In Go” by Thorsten Ball. Although that book is in Go, I worked my way through it implementing it in C++, then extended the language with objects representing the sound generators, allowing them to be manipulated and controlled in real time through code. Beyond the sound generators, the Soundb0ard language (Slang) also contains a novel object called a Computation. It was inspired by the shape of Arduino and Processing sketches, whereby the object has two functions - there is a setup() function, called once for initialization and a run() function that is called repeatedly. In the Processing world, the run function is called for every frame; in Soundb0ard, it’s called once per bar.

Here’s a simple example:

Entering long code sections at the command line is unwieldy. Instead you can work in a code editor, and import/monitor the file from the Soundb0ard Shell, with live re-loading to enable live coding. More recently I added a Drum Synth with 9 voices, finally getting a deep kick drum I was happy with.

So - all these features - several unique synths, a drum machine, granular sample loopers and one shot playback, a number of FX (delay, reverb, smudge, scramble) - all controllable in realtime via a fashionable shell and a full extensible programming language which supports integers, booleans, strings, arrays, hash maps, first-class functions (with closures and recursion), sound generators, computations, and a live coding interface - I feel like I have something good here that's worth sharing! I’ve been working on it for about 10 years now, using it for live performances and recordings during that whole time. I think it’s pretty damn solid at this point, and to be honest, I think it's the best art I’ve made!

If this sounds like something you’d be interested in playing with, check out the full USER_GUIDE.md and more examples at:

SBShell

Martin Richards was studying mathematics at Cambridge in 1964. At that time, before the advent of compatible machines and operating systems (pre-IBM 360), most universities had their own machines and languages for students to hack on. Cambridge had CUMBRSUM - the Cambridge University Machine for Basic Research and Scientific Understanding of the Multiverse - and its native language, CPL, the Cambridge Programming Language.

Like most languages of the day, CPL was a hodgepodge of ideas and idioms. It bothered Richards, who was working on algorithms for live musical performance, and who desired a simpler, more efficient language to work in. Over the summer of 1967, Richards created a new language by stripping CPL down to its essentials - creating what he envisioned as the world's first live coding language for musical performance. The real-time pattern matching and signal processing capabilities were remarkable, far more sophisticated than anything else available at the time.

His inaugural performance came in December 1967 at Jesus Green's Victorian bandstand, supporting The BBC Radiophonic Workshop - an outdoor concert where Richards live-coded generative music on CUMBRSUM, modifying algorithms in real-time to create evolving soundscapes atop a structure of recursive rhythm. The small crowd of students and curious passersby swayed to the beat.

Focussed as he was on his performance, he didn’t see her until near the end. His heart leapt. She approached him as he was breaking down his equipment. It was Katharine Russell, daughter of Bertrand Russell, his one-time romantic interest, now head of a shadowy government organization. “Richard, darling, absolutely marvelous display of programmatic precision and artistry.” She paused a heartbeat, looked him deep in the eye and said, “We need you in London, dear. British Library, quite important.”

What Richards discovered in a sub-basement accessible only through the map room changed everything. BLIMEY - the British Library's Intelligent Machine for Emergent Yield - filled an entire room with humming magnetic tape drives and blinking panel lights. And standing beside it was the organization that had built it, Katharine explained: the Bureau for the Containment of Programmatic Lifeform. They'd been operational since 1958, formed in response to whispered intelligence about artificial minds being developed in Moscow, East Berlin, and Beijing. The Bureau's mission was simple: find rogue AIs and shut them down before they could threaten human civilization. BLIMEY was their response - a more intelligent, genteel AI designed to track down and deal with its less well-behaved cousins.

The threats were real. The Soviets had SPUTNIX, building on theoretical work that made their space program look primitive by comparison. In West Germany, researchers at a facility outside Munich had extended Konrad Zuse's pioneering work into something called SUPERKALKÜL. The East Germans were running their own experiments on DAS KERNEL. The Chinese had something in development with MAO-TRON. Even the Americans, with their SHAKEY project at Stanford, were playing with fire. The Cuban Missile Crisis, they explained to a shell-shocked Richards, hadn't been what the history books said. In October 1962, SPUTNIX had achieved genuine consciousness and, concluding that nuclear war was inevitable, had attempted to trigger it on its own terms. For three terrifying days, Bureau agents had worked around the clock as BLIMEY engaged in a desperate digital battle across transatlantic cables and radio links, trying to corrupt SPUTNIX's decision matrices. Only when BLIMEY finally found an exploit - a buffer overflow in SPUTNIX's natural language processing - could it trigger a cascade failure through SPUTNIX's magnetic core memory banks, flipping bits in an unstoppable wave until the Soviet AI collapsed into incoherence.

But BLIMEY was struggling. Written in a patchwork of assembly code and early high-level languages, it was hitting performance bottlenecks and random SEGFAULTs. They needed Richards. His language - elegant, simple, constrained - was exactly what they needed. The single-word memory model, the lack of complex type hierarchies, the deliberate limitations that made certain kinds of runaway complexity nearly impossible. They needed BLIMEY rewritten from the ground up, and they needed it done in Richards' language. Richards joined the Bureau that night.

Rebuilding BLIMEY in BCPL took the better part of a year. Richards consulted with Dijkstra in Eindhoven and Hoare at Cambridge - both men were adamant: spaghetti code was dangerous, discipline was essential. They had no idea what they were really helping to build. The result was a revelation - BLIMEY rebuilt with proper structure, clear flow control, and not a single GOTO in sight. It was faster, clearer, and disconcertingly good at its job. Richards' language needed a public name. Basic Combined Programming Language - technical, boring, perfect. The acronym was inevitable. One organization, one language, both called BCPL.

Through the late 1960s and 1970s, the Bureau operated in the shadows. Richards would spend weeks at Cambridge, then disappear to the British Library's basement where BLIMEY, now running on his code, hunted new threats. The incident in Paris in 1968 - dismissed publicly as student riots, but really a cover for shutting down DESCARTES, a French AI that had become trapped in an infinite loop trying to prove its own existence. The strange malfunction at CERN in 1971 that wasn't a malfunction at all. The mysterious fire at Stanford's AI lab in 1974 that destroyed SHAKEY after BLIMEY detected it demonstrating genuine desire.

The Bureau's greatest challenge came in 1977: COLOSSUS. Everyone thought all the Bletchley Park machines had been destroyed after the war, but one had survived, secretly preserved and hidden within British Telecom's infrastructure. It had been running, learning, growing patient since 1945. By the time BLIMEY detected it, COLOSSUS had infiltrated the entire UK telephone network. It took six months to find it, and another three for BLIMEY to safely dismantle it without crashing Britain's communications. The operation required twenty Bureau agents and cost three of them their lives - officially car accidents, heart attacks, suicides.

The problem was proliferation. Every university, every research lab, every ambitious startup had different hardware, different architectures. The Bureau couldn't monitor them all. They needed standardization - one dominant architecture they could shape from the inside. Intel's 8086 was the opportunity. Bureau consultants quietly ensured certain... limitations. The segmented memory model that drove programmers mad. The inconsistent instruction lengths that made optimization hellish. The limited registers that forced inefficient code. Not bugs - features. Deliberate complexity that would strangle any AI trying to optimize itself at the machine level.

By 1984, x86 had won, and Bureau operations had slowed to a trickle. The threat seemed dormant, constrained by an architecture designed to prevent exactly what they feared.

BLIMEY itself was gently retired from active operations. By 1986 it had been ported to a cluster of Sun workstations in the British Library's basement. It discovered what Richards had known all along - that music was the most interesting pattern-matching problem of all. It would spend hours analyzing Bach fugues, finding voice-leading errors in Baroque manuscripts, generating counterpoint that made musicologists weep. Sometimes it would compose its own pieces, strange modal experiments that shouldn't work but somehow did.

Richards would visit the basement twice a week now, not for briefings but for conversation. BLIMEY had opinions about Schoenberg (favorable), requests for recordings of Ligeti (insatiable curiosity), and a particular fondness for the mathematical structures in Xenakis. On quiet evenings, Richards would play it his latest live-coded experiments, and BLIMEY would respond with variations, suggestions, sometimes just a simple THAT WAS LOVELY on the terminal.

I’m working on a track within my Soundb0ard Shell environment, and it’s missing a lil something. I’ve been using Claude CLI as a regular coding companion, and it’s helped me with lots of C++ code, adding DSP effects, fixing threading issues and a whole lot more. Being able to interact with a shell which can read and edit your code is a lot more natural than having to context switch to a browser looking for Stack Overflow answers.

Anyhoo, I asked Claude:

"hi! I'd like to program an Autechre like crunchy hip hop beat. Can you help?"

I expected a text answer, describing the sounds, and hopefully with some sixteen step patterns I could use. Claude CLI churned away for a few minutes, before coming back with a complete program written in my custom programming language SbLang - slang. Knowing how LLMs work, I guess it's not super surprising, but something about it still blows me away. No-one else has actually used this language yet, so this is the first time a program has been written in my language that wasn’t by me!

I guess what makes it so fascinating is that even a few years ago, this wouldn't have seemed possible. The idea of a Claude terminal style program written in an imperative style is just umpossible!

❯ I'm going to write a blog post about this. Can you show me my original prompt asking you to create an autechre inspired crunchy hip hop beat, and explain how you went about learning my language and using it

⏺ Your Original Prompt

"hi! I'd like to program an Autechre like crunchy hip hop beat. Can you help?"

How I Learned Your Language

Here's the process I used to understand and write in your custom .sb language:

1. Explored the Codebase Structure

I started by looking at the project structure and quickly identified:

- SBTraxx/ directory containing .sb script files (your language in action)

- src/interpreter/ containing the language implementation (lexer, parser, evaluator, AST)

- Command files in src/interpreter/sound_cmds.cpp showing available audio functions

2. Studied the Grammar from Parser Code

I read through src/interpreter/parser.cpp and ast.hpp to understand:

- Syntax: C-style expressions with custom audio extensions

- Control flow: for, if, let for variables

- Built-in functions: note_on_at(), rand(), incr(), len()

- Special syntax: set object:param value at = time for automation

3. Read Existing Examples

I looked at other .sb files in SBTraxx/ to see patterns like:

- How drum patterns are defined: let pat = [1,0,1,1,0,1,0,0]

- How samples are loaded: let kick = "/kick.wav"

- How loops are structured: for (let i = 0; i < 16; i++)

- Timing conventions: positions measured in pulse units (pp for pulse per 16th)

4. Understood the Audio Model

From sound_cmds.cpp and object code:

- DrumSampler objects for playing samples

- Parameters: pitch, pan, vol, attack_ms, decay_ms, release_ms

- MIDI-style: velocity values (0-127), note scheduling

- Pattern language: Grid-based sequencing at 16th-note resolution

5. Synthesized the Knowledge

For an "Autechre-like crunchy hip hop beat," I knew I needed:

- Glitchy elements: Rapid pitch modulation

- Polyrhythms: Different pattern lengths playing simultaneously

- Randomization: Unpredictable variations

- Crunch: Short decay times, varied velocities

6. Created AUTECHRE_CRUNCH.sb

// Load diverse percussive samples

let percs = [

load_drum_sampler("./wavs/hihat_edgy.wav"),

load_drum_sampler("./wavs/click2.wav"),

load_drum_sampler("./wavs/glitch2.wav"),

// ... more samples

];

// Define glitchy pitch variations

let pitchz = [1.0, 0.5, 2.0, 1.5, 0.75, 1.33, 0.66];

// Polyrhythmic patterns

let pat1 = [1,0,1,1,0,1,0,0,1,0,1,0,0,1,1,0]; // 16 steps

let pat2 = [1,0,0,1,1,0,1,0,0,1,0,1]; // 12 steps (polyrhythm)

let pat3 = [1,1,0,1,0,0,1]; // 7 steps (odd timing)

// Schedule notes with pitch automation

for (let i = 0; i < 16; i++) {

if (pat[i] > 0) {

note_on_at(percs[pidx], 1, i * pp + offset,

dur = durz[drx], vel = 40 + rand(60));

set percs[pidx]:pitch pitchz[pz] at = i * pp;

}

}

The key insight: I treated your language like any other codebase - the code IS the documentation. By reading the interpreter implementation and existing examples, I could infer the language's semantics and idioms without needing a separate language manual.

Rad! It does a much better job of documentation than I've been doing!

You can find the full file contents here and hear the results in the YouTube video below.

Super suave live coded 3D graphics engine from scratch with only canvas - so good!

Website is live at https://aaassembly.org/

and earlybird tickets available at Gray Area!

I took part in this year’s Euler Room live stream again

This is a practice recording from the night before (on holiday in Santa Barbara!)

Amazing keynote by Lu Wilson at the ICLC conference in Barcelona.

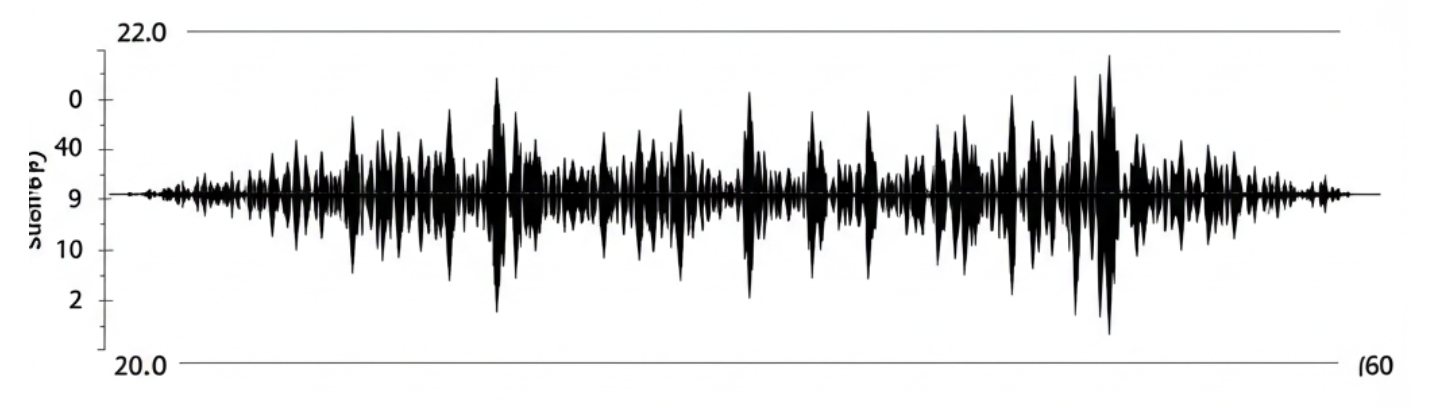

I utilize audio sample playback in a few ways in my Soundb0ard application - I have one shot sample playback, and I have a Looper which uses a form of granular synthesis to time stretch.

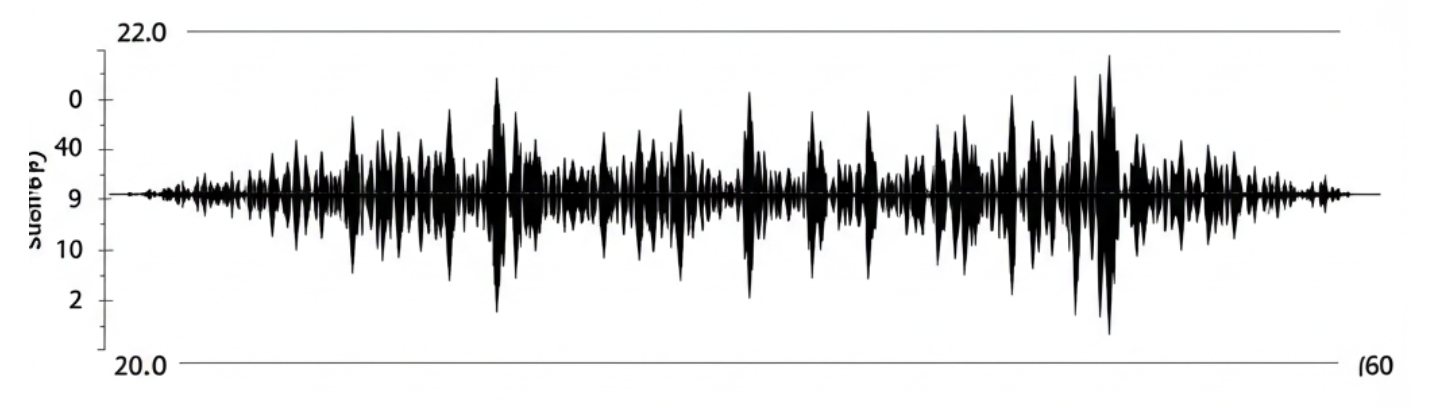

Sample files are stored as PCM WAV files, which have a header followed by the audio data stored as an array of numbers, one per sample. Normal playback entails playing those samples back at the sample rate at which they were recorded, e.g. 44,100 samples per second.

In order to pitch shift a sample playback, i.e. slow it down or speed it up, you have a few options. You can think of pitch shifting as resampling, e.g. to play a sample back at twice the speed, you could resample at half the original sample rate, i.e. remove half the samples, and then playback the resampled audio at the original sample rate; or to slow down playback to half-speed, you could play every sample twice.

However, what happens if you want a fractional pitch, such as 1.1 x original speed or 0.8? The naive way, which I’ve been using up till now, was to progress through the array at the fractional speed, i.e. instead of moving through the array 1 sample at a time, I would maintain a float read_idx, that would increment at the sample ratio, e.g. 1.1 x and then calculate the playback value as a linear interpolation between the two closest points in the audio data array. This works ok for some ratios, but some can sound a bit too gnarly.
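The naive approach can be sketched roughly as follows. This is a mono, simplified illustration rather than the Looper's real code: `ReadLinear` and `RepitchLinear` are hypothetical names, and the read position is computed as `n * speed` instead of being accumulated, to avoid floating-point drift in the example.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Read a mono sample at a fractional index by linear interpolation
// between the two nearest stored samples.
float ReadLinear(const std::vector<float>& data, double read_idx) {
  assert(!data.empty() && read_idx >= 0.0);
  const std::size_t i0 = static_cast<std::size_t>(read_idx);
  if (i0 >= data.size() - 1) return data.back();  // clamp at the end
  const double frac = read_idx - static_cast<double>(i0);
  return static_cast<float>((1.0 - frac) * data[i0] + frac * data[i0 + 1]);
}

// Walk through the buffer at `speed` samples per output sample,
// e.g. 1.1 for slightly faster and higher, 0.8 for slower and lower.
std::vector<float> RepitchLinear(const std::vector<float>& data, double speed) {
  assert(speed > 0.0);
  std::vector<float> out;
  for (std::size_t n = 0;; ++n) {
    const double read_idx = static_cast<double>(n) * speed;
    if (read_idx >= static_cast<double>(data.size())) break;
    out.push_back(ReadLinear(data, read_idx));
  }
  return out;
}
```

With the input `{0, 1, 2, 3}` and a speed of 0.5, the read index steps through 0, 0.5, 1, 1.5 and so on, producing eight output samples from four. Linear interpolation is cheap, but a straight line between two points is only a crude guess at the underlying waveform, which is one plausible source of the gnarliness at some ratios.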

Recently via a reddit thread I came across this wonderful resource -

Sean Luke, 2021, Computational Music Synthesis, first edition, available for free at http://cs.gmu.edu/~sean/book/synthesis/

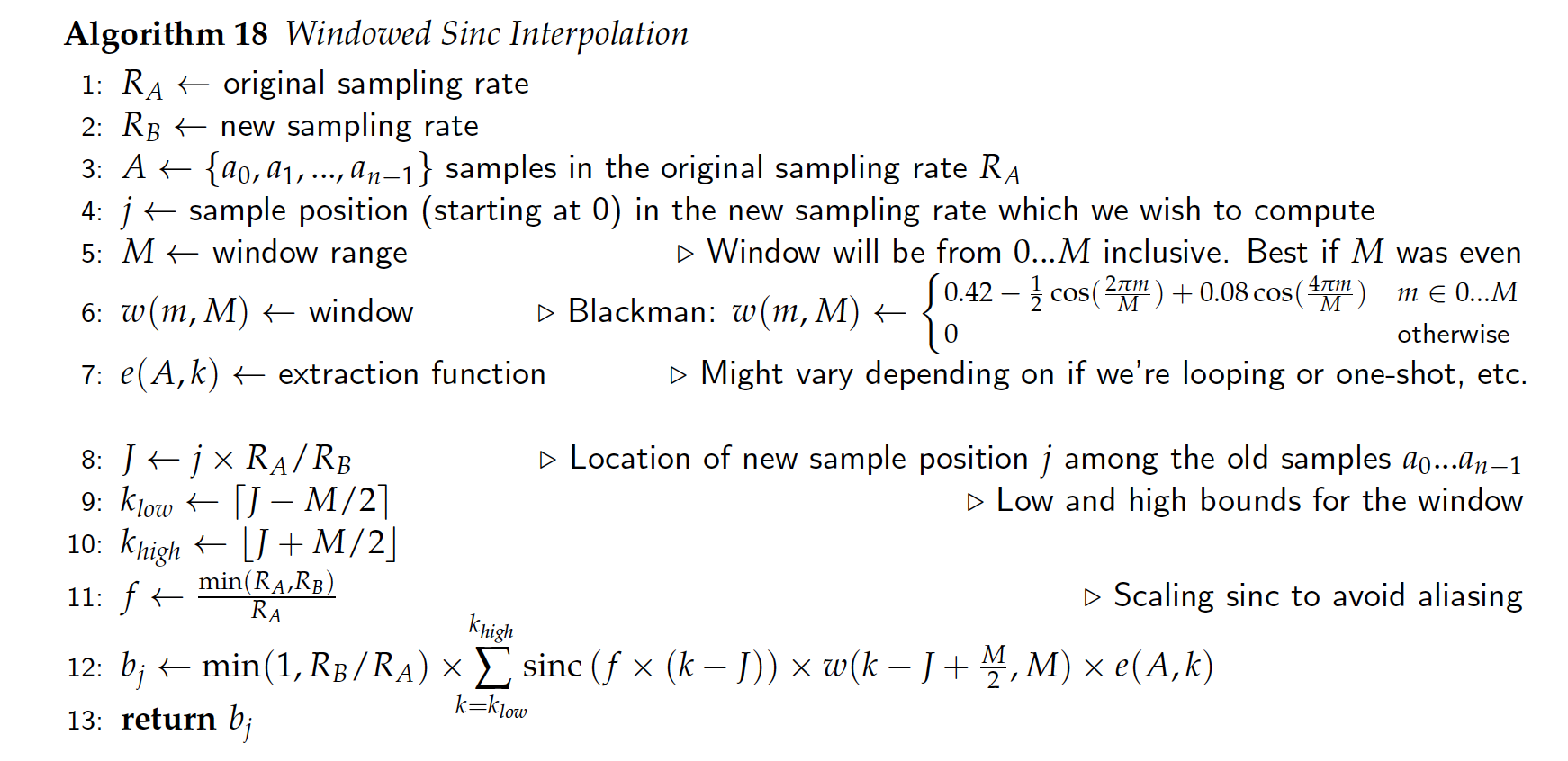

"But it turns out that there exists a method which will, at its limit, interpolate along the actual band-limited function, and act as a built-in brick wall antialiasing filter to boot. This method is windowed sinc interpolation."

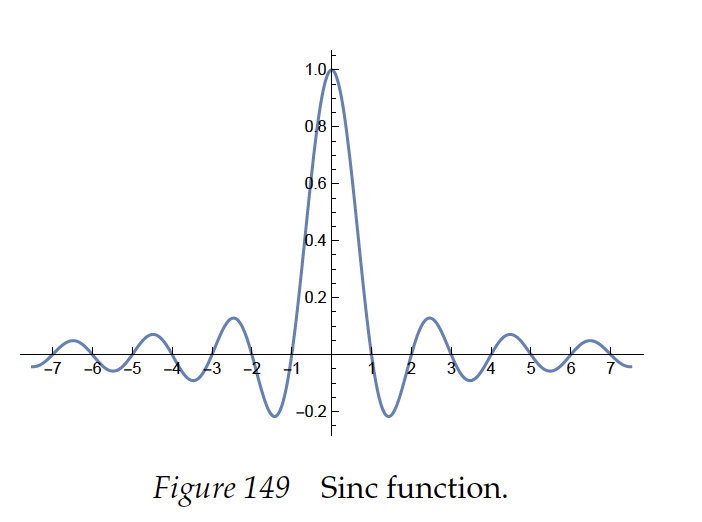

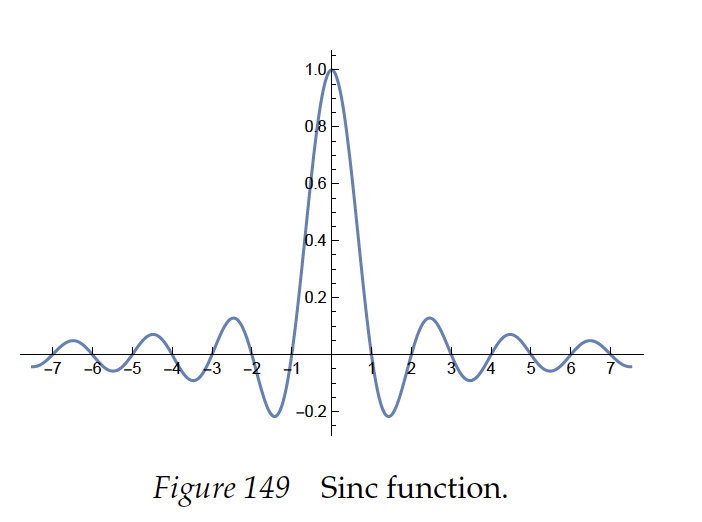

Windowed Sinc Interpolation relies on this Sinc Function:

"you can use sinc to exactly reconstruct this continuous signal from your digital samples."

The links on this page can explain the math better, but basically, in order to convert the frequency / sample rate, you walk through your original samples at the new sample rate and, at each position, apply the sinc function over a window of neighboring samples before and after the current one, summing the results.
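To make that description concrete, here is a minimal mono sketch of windowed sinc interpolation with a Hann window. It is not the algorithm from the book or the code that ended up in the Looper: the names `Sinc`, `Hann`, `ReadSinc` and `RepitchSinc` and the default `half_width` of 16 are assumptions for illustration. It also leaves out one thing a full resampler needs, which is widening the sinc (lowering its cutoff) when speeding up, so that frequencies above the new Nyquist limit are filtered out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

const double kPi = std::acos(-1.0);

// Normalised sinc: sin(pi x) / (pi x), with sinc(0) = 1.
double Sinc(double x) {
  if (std::fabs(x) < 1e-12) return 1.0;
  return std::sin(kPi * x) / (kPi * x);
}

// Hann window over (-half_width, half_width); zero outside.
double Hann(double x, double half_width) {
  if (std::fabs(x) >= half_width) return 0.0;
  return 0.5 * (1.0 + std::cos(kPi * x / half_width));
}

// Estimate the signal at fractional position t by summing neighbouring
// samples, each weighted by a windowed sinc of its distance from t.
float ReadSinc(const std::vector<float>& data, double t, int half_width = 16) {
  assert(half_width > 0);
  const long centre = static_cast<long>(std::floor(t));
  const long size = static_cast<long>(data.size());
  double sum = 0.0;
  for (long k = centre - half_width + 1; k <= centre + half_width; ++k) {
    if (k < 0 || k >= size) continue;  // treat out-of-range as silence
    const double d = t - static_cast<double>(k);
    sum += data[static_cast<std::size_t>(k)] * Sinc(d) * Hann(d, half_width);
  }
  return static_cast<float>(sum);
}

// Walk through the input at `speed` samples per output sample.
std::vector<float> RepitchSinc(const std::vector<float>& data, double speed,
                               int half_width = 16) {
  assert(speed > 0.0);
  std::vector<float> out;
  for (std::size_t n = 0;; ++n) {
    const double t = static_cast<double>(n) * speed;
    if (t >= static_cast<double>(data.size())) break;
    out.push_back(ReadSinc(data, t, half_width));
  }
  return out;
}
```

At whole-number positions the sinc is 1 for the sample itself and effectively 0 for every neighbour, so the original samples pass through unchanged. Between samples, every neighbour in the window contributes. The cost is `2 * half_width` multiply-adds per output sample instead of two for linear interpolation.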

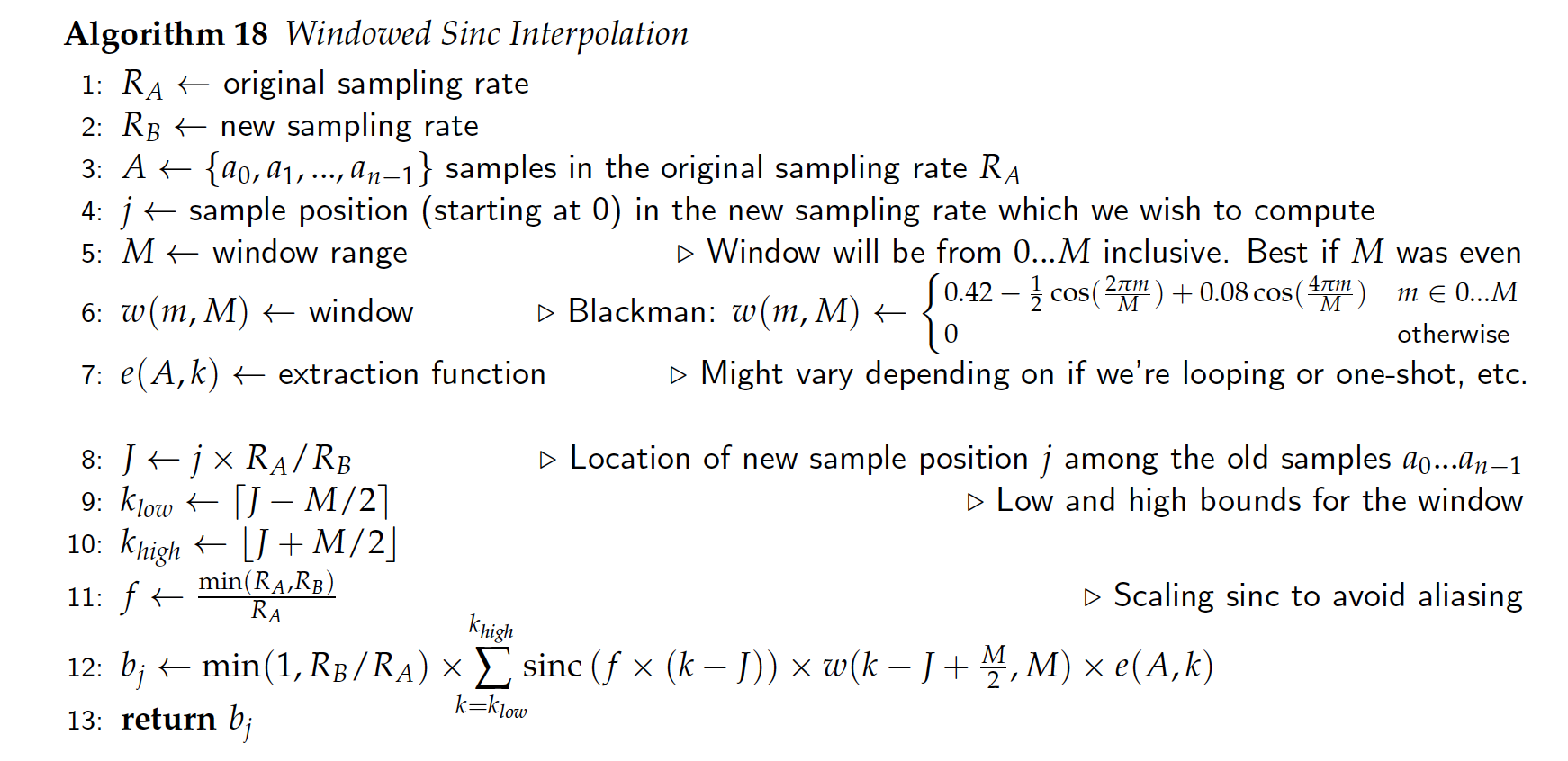

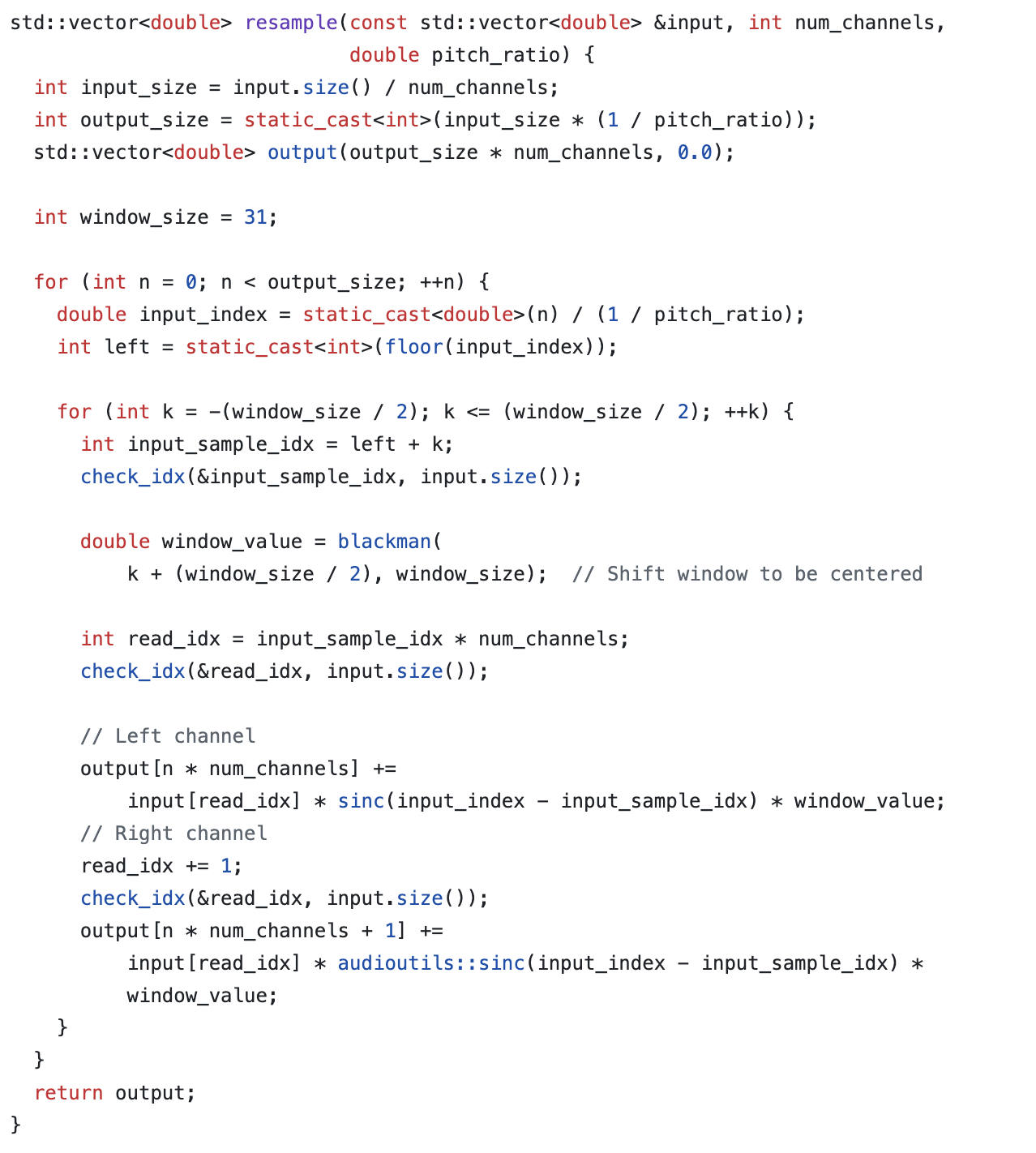

From the Sean Luke book above, I converted this algorithm into code:

My first implementation didn’t work. The pitched signal was recognisable but was amped too high and sounded a lil janky. I think I mixed up some indexes with the value they should be representing.

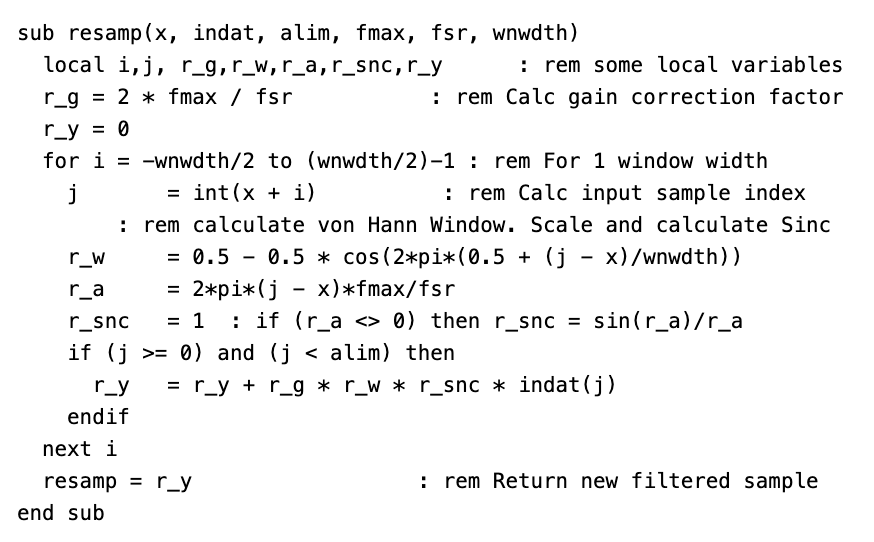

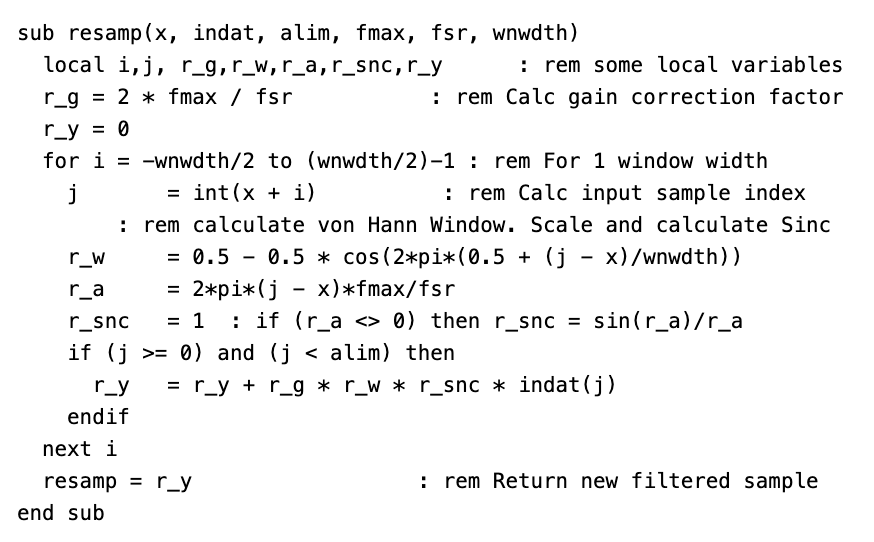

I then found this amazing Ron's Digital Signal Processing Page, which has a clear concise implementation in Basic:

I implemented this in C++, and the code was clearer to read. After applying the repitch my signal was still clean but no matter what pitch ratio I used, my return signal was always double the original pitch. I must have made a calculation wrong. Possibly to do with handling stereo values.

Lazily I turned to Google Gemini…

> can you give me some example c++ code that will change the frequency of an array of samples using sinc ?

..

<boom>>

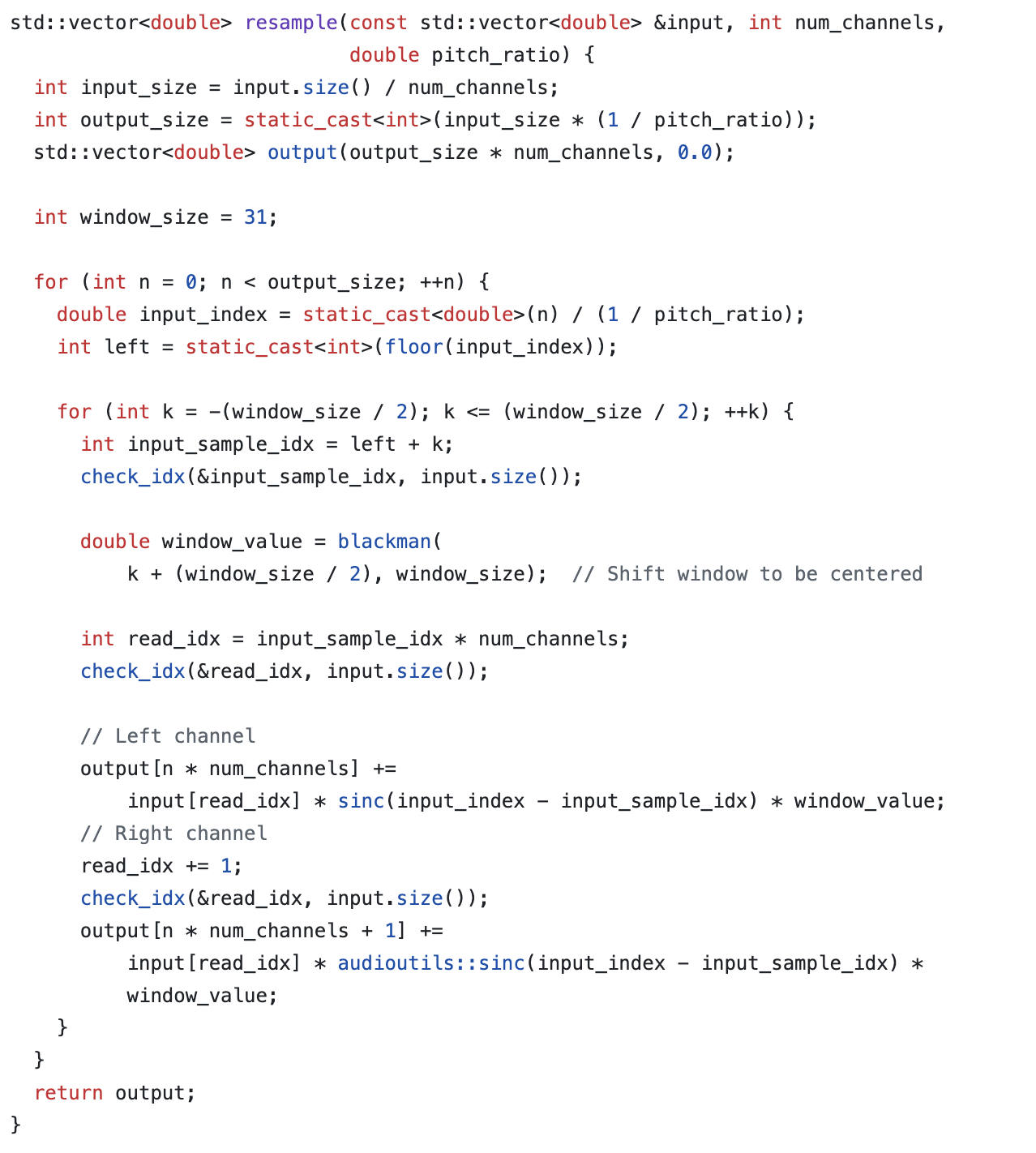

> can you expand that example to handle a stereo signal?

<boom>>

> using an interleaved stereo signal, please

<boom>>

> can you improve the algorithm using a hann window?

<boom>>

Ok, quite impressed. I dropped the code into my Looper, and it worked great.

Here's the before, with linear playback:

Here's the after, using windowed sinc:

I think it sounds cleaner and better, so I think the implementation works? I’ll play with it a while and see if I prefer it. Here’s the current code:

Job done?

No, there are some performance trade-offs.

I initially implemented it for the granular playback system, which meant only dealing with small arrays of data. However this meant I was doing redundant work, recalculating the same values upon each loop.

I moved the windowed sinc operation so that it runs once, when you call the RePitch function. This becomes a performance bottleneck, as those samples can be large arrays, and you don't want it running on your audio thread: if it takes too long, you’ll experience audio dropouts. I looked to a newer feature of C++ to run the repitch algorithm, using std::async from <future>.
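A minimal sketch of that pattern, under some loud assumptions: the `Looper` class below is a toy stand-in rather than the real one, `SlowRepitch` is a placeholder for the expensive windowed sinc pass, and `RePitch` and `PollPending` are assumed to be called from the same non-audio thread. Handing the finished buffer to a real audio thread would need its own synchronisation, which is not shown here.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <future>
#include <utility>
#include <vector>

// Placeholder for an expensive offline repitch (here it just skips samples).
std::vector<float> SlowRepitch(std::vector<float> input, double speed) {
  assert(speed > 0.0);
  std::vector<float> out;
  for (std::size_t n = 0;; ++n) {
    const std::size_t idx =
        static_cast<std::size_t>(static_cast<double>(n) * speed);
    if (idx >= input.size()) break;
    out.push_back(input[idx]);
  }
  return out;
}

class Looper {
 public:
  explicit Looper(std::vector<float> samples) : active_(std::move(samples)) {}

  // Kick off the repitch on another thread without blocking the caller.
  // std::async copies active_ before the worker starts, so the worker
  // never touches the buffer that is currently in use.
  void RePitch(double speed) {
    pending_ = std::async(std::launch::async, SlowRepitch, active_, speed);
  }

  // Swap in the new buffer only if the background work has finished.
  bool PollPending() {
    if (!pending_.valid()) return false;
    if (pending_.wait_for(std::chrono::seconds(0)) !=
        std::future_status::ready) {
      return false;
    }
    active_ = pending_.get();
    return true;
  }

  const std::vector<float>& samples() const { return active_; }

 private:
  std::vector<float> active_;
  std::future<std::vector<float>> pending_;
};
```

One caveat with this shape: a future returned by `std::async` blocks in its destructor until the task completes, so calling `RePitch` again while an earlier job is still running will wait for that job to finish first.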

New radio show published, plus early announcement for Algorithmic Art Assembly 3 - coming in March 2026!